i’m working on my raspberry pi based time displacement project rn, and there's a fair bit of regular downtime when recompiling and debugging things on the pi, so i thought i'd write down some stuff on the basic concepts while shit's all `make && make run`-ing, whoops “SEGMENTATION ERROR EXIT CODE” etc

I'd like to make a distinction between slitscanning and time displacement in this context. Slitscanning is a process that originated in film photography, where only a tiny portion of the film for a single picture gets exposed at any given time, typically via some kind of mechanical apparatus that sits over the lens and restricts the light. This allows one to capture a wide range of time slices in one single photograph.
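
to make the slit idea concrete, here's a minimal sketch of a still slitscan in Python. it assumes a vertical slit that sweeps left to right at exactly one column per time step, so output column x only ever "sees" the scene at time x (real mechanical rigs vary a lot; `slitscan_still` is just a hypothetical helper name):

```python
def slitscan_still(frames, width, height):
    """Build one still image where column x is exposed at time step x.

    frames[t][y][x] is the scene at time t. Assumes a vertical slit that
    moves one column per time step, so output column x only sees frame x.
    """
    if len(frames) < width:
        raise ValueError("need at least one frame per output column")
    out = [[0] * width for _ in range(height)]
    for x in range(width):
        frame = frames[x]  # the moment the slit sits over column x
        for y in range(height):
            out[y][x] = frame[y][x]
    return out
```

the whole time range ends up squashed into one picture: left edge is the earliest moment, right edge the latest.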

if you've ever played around with long exposures (leaving the shutter open in low light and goofing around), then you've already got a pretty decent idea of how this works. you can do some pretty crazy stuff either by leaving a shutter open in extremely low light and slowly moving around the frame, and/or by using flashlights/laser pointers to expose portions of it.

this method works extremely well for still photography but proved somewhat cumbersome to adapt for video in the old analog days. Famously, the climax of 2001: A Space Odyssey used slitscan effects to create the multidimensional tunnel sequence, but the thing to note about how this was managed is that each single frame of that sequence was created as a slitscan photograph, and then those photographs were used to essentially stop-motion animate the sequence. more details on that process over here!

The main problem with turning slitscanning into something that works as an animation was (and to a certain extent still is) memory! without some kind of digital framebuffer around to store rather massive amounts of video, folks had to resort to the old school analog ‘framebuffer’, i.e. just fucking filming it. this is a pretty common issue in video tool design: video is fundamentally a multidimensional signal (two dimensions of space plus one of time), and the more dimensions a signal has, the more difficult it is to scale operations from one time slice (a still image) to any given number of slices.

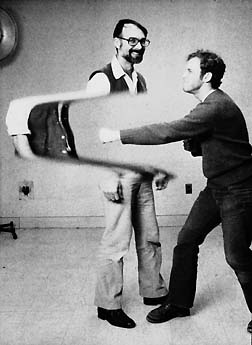

but wait what about this?

it clearly says ‘slit scan video’ and it looks like there are some video things happening! and it's all written in Processing, so it can’t be that difficult to manage! Yes, it's true that portions of this image are updating at video rate from a live video input! however, compare that to this

and we can see that there is something pretty fundamentally different happening here.

In Shiffman's vid up above, we can see that just one single line of video pixels is being updated at any given point in the video. This is more like the old school slitscan photography technique abstracted with some simple digital logic, but we don’t exactly get a video feel out of it, as the overwhelming majority of the pixel information on the screen is essentially static in time as soon as it gets written into the draw buffer.
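
roughly, that kind of sketch boils down to something like the following Python (a hedged sketch, not Shiffman's actual Processing code; `SlitScanner`, the centre-column default and the wrap-around cursor are my assumptions). each new live frame contributes exactly one column to a persistent draw buffer, and everything already written just sits there:

```python
class SlitScanner:
    """Copy one column of each live frame into a persistent draw buffer.

    Hypothetical helper: only one column changes per frame, so the rest of
    the buffer stays frozen in time once written.
    """

    def __init__(self, width, height, slit_x=None):
        self.width, self.height = width, height
        # sample the centre column of the input by default (an assumption)
        self.slit_x = width // 2 if slit_x is None else slit_x
        self.buffer = [[0] * width for _ in range(height)]
        self.cursor = 0  # next draw-buffer column to write

    def update(self, frame):
        """frame[y][x] is the latest live input frame."""
        for y in range(self.height):
            self.buffer[y][self.cursor] = frame[y][self.slit_x]
        # advance and wrap, overwriting the oldest column next time round
        self.cursor = (self.cursor + 1) % self.width
        return self.buffer
```

per frame that's `height` pixel writes instead of `width * height`, which is why it's cheap, and also why it doesn't feel like video.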

In the Zbigniew film, we can see that EVERY single pixel is getting updated in every single frame, allowing for an intensely surrealistic experience! This is real-deal time displacement. It is also pretty massively bulky to manage in terms of memory: in order to avoid visible quantization artefacts, Zbigniew had to painstakingly reassemble the film over the course of months at a temporal resolution of 480 horizontal lines, so that the top line of pixels in each frame is the most ‘recent’ time slice and the bottom line of pixels in each frame is from 480 frames in the past (assuming this was at 24 fps, that means there is a 20 second difference from top to bottom in each frame).
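
the digital version of that needs a framebuffer holding a whole history of frames plus a per-row delay lookup. here's a minimal Python sketch assuming one byte per pixel (grayscale) and a ring buffer; `TimeDisplacer` and `delay_for_row` are hypothetical names, and this is the general technique rather than how my Pi version is actually written:

```python
class TimeDisplacer:
    """Ring buffer of past frames; each output row is read from its own delay."""

    def __init__(self, width, height, depth):
        self.w, self.h, self.depth = width, height, depth
        # one byte per pixel, `depth` frames of history
        self.frames = [bytearray(width * height) for _ in range(depth)]
        self.head = -1  # index of the most recently pushed frame
        self.count = 0  # how many frames have actually been stored

    def push(self, frame):
        if len(frame) != self.w * self.h:
            raise ValueError("frame size mismatch")
        self.head = (self.head + 1) % self.depth
        self.frames[self.head][:] = frame  # overwrite the oldest slot in place
        self.count = min(self.count + 1, self.depth)

    def render(self, delay_for_row):
        """delay_for_row(y) -> how many frames in the past row y comes from."""
        if self.count == 0:
            raise RuntimeError("no frames pushed yet")
        out = bytearray(self.w * self.h)
        for y in range(self.h):
            # clamp to the history we actually have
            d = max(0, min(delay_for_row(y), self.count - 1))
            src = self.frames[(self.head - d) % self.depth]
            row = slice(y * self.w, (y + 1) * self.w)
            out[row] = src[row]
        return out
```

the film's mapping is basically `delay_for_row=lambda y: y` with 480 rows and a depth of 480: the top row is live and the bottom row is 479 frames back, i.e. about 20 seconds at 24 fps.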

i’ve long wanted to abstract this kind of process into something that could work as a general purpose real-time AV tool for digital and analog video stuff, and i’m making pretty good progress on my thing so far. Even with modern microcomputers/nanocomputers working at SD resolutions, it can get pretty tricky to keep things from slowing down into mush or just crashing hard at certain resolutions, so i’ve been seeing how low-res i can go and still have some fun zones. Right now, for both the desktop and rpi versions i’m working on, i can get at least 480 frames of past video stored, but the caveat is that the video inputs are natively working at 320x240 and the video information is being stored as low level pixels at the depth of chars (one byte each), meaning that one can’t really exploit any sort of GL parallelism to speed things up; it's currently a very brute force affair. To control how the time displacement works, instead of using any kind of fixed gradient mapping, i built some low-res oscillators so that one can sort of oscillate a video through time.
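
for a sense of scale, and of what an oscillator-driven delay map could look like, here's a rough Python sketch. the memory math just multiplies out the numbers above at one char (byte) per channel; whether you store 1 or 3 channels is an assumption. `sine_delay_map` is a hypothetical stand-in for my oscillators: a sine sampled once per row, mapped onto 0 to depth-1 frames of delay:

```python
import math


def buffer_bytes(width, height, frames, channels=1):
    """Bytes needed to hold `frames` frames at one byte (a char) per channel."""
    return width * height * frames * channels


def sine_delay_map(height, depth, cycles=1.0, phase=0.0):
    """Hypothetical low-res oscillator: one delay (in frames) per row.

    `cycles` is how many sine periods span the frame top to bottom;
    advancing `phase` (0..1) a little each frame makes the displacement
    pattern drift, so the video sort of oscillates through time.
    """
    delays = []
    for y in range(height):
        s = math.sin(2.0 * math.pi * (cycles * y / height + phase))
        # map -1..1 onto 0..depth-1 frames of delay
        delays.append(int((depth - 1) * (0.5 + 0.5 * s)))
    return delays


if __name__ == "__main__":
    mono = buffer_bytes(320, 240, 480)       # 36864000 bytes, about 35.2 MiB
    rgb = buffer_bytes(320, 240, 480, 3)     # 110592000 bytes, about 105.5 MiB
    print(mono, rgb)
    print(sine_delay_map(240, 480, cycles=2.0)[:8])
```

the returned list works as a per-row lookup (`delays[y]`), and swapping the sine for a triangle, saw or a quantised version is a one-line change, which is kinda the point of going oscillator instead of fixed gradient.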

these things are still pretty raw in development, and i still have a fair number of spare knobs and shit i can devote to controlling things on the rpi version, so i’m interested to hear what anyone might want to see out of this kind of thing. something i'm still working out is a ‘freeze’ function that grabs a contiguous slice out of the 480 frames and freezes the input at that point so you can have time loops to work with. controls for quantisation and some hacky attempts at smoothing things out are all in progress as well!
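
one way the freeze and quantisation bits could work, sketched in Python under a couple of assumptions: the history is available as a list ordered oldest to newest, and the frozen slice gets copied so new input can't overwrite the loop. `FrozenLoop` and `quantize_delay` are hypothetical names, not what's on the Pi right now:

```python
def quantize_delay(delay, step):
    """Snap a delay (in frames) down to a multiple of `step` for stepped, blocky displacement."""
    return (delay // step) * step


class FrozenLoop:
    """Hypothetical 'freeze': copy a contiguous run of past frames and loop it."""

    def __init__(self, history, start, length):
        # history is ordered oldest -> newest
        if start < 0 or length <= 0 or start + length > len(history):
            raise ValueError("slice must lie inside the stored history")
        # copy the frames so live input can keep writing to the ring buffer
        self.frames = [bytes(f) for f in history[start:start + length]]
        self.pos = 0

    def next_frame(self):
        """Return the next frame of the loop, wrapping back to the start."""
        frame = self.frames[self.pos]
        self.pos = (self.pos + 1) % len(self.frames)
        return frame
```

copying costs a chunk of extra memory for long loops; the cheaper alternative is to just stop advancing the ring buffer's write head while frozen, at the cost of losing live input during the freeze.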

Looking at this with great interest. I find this kind of image distortion fascinating and I’d love to add this to my toolbox. Personally, I welcome any compromises on resolution or color depth if they allow a Raspberry Pi to produce such magic (I’m currently working at 576p anyway, often with B/W sources at even lower resolution)